Just a small data point: at least with AUv3, I’ve found that Logic does split the buffers rather than using the AU parameter ramping stuff. (REAPER, on the other hand, does use the AU parameter ramping stuff.)

So Apple isn’t using its own framework? Ironic. They probably do that because, as I said, there’s no way to guarantee that plugins properly read the sample-accurate changes, and they want accurate automation regardless.


I’m telling you, DAWs have to implement all sorts of hacks to provide a good experience. Plugin dev is the wild west, and users will always blame the DAW.

My bad for abusing the word “smoothing”. But I think we all get the idea: “jumps” that you would hear at large buffer sizes become inaudible at small buffer sizes. Many plugins do have internal smoothing, but not all.

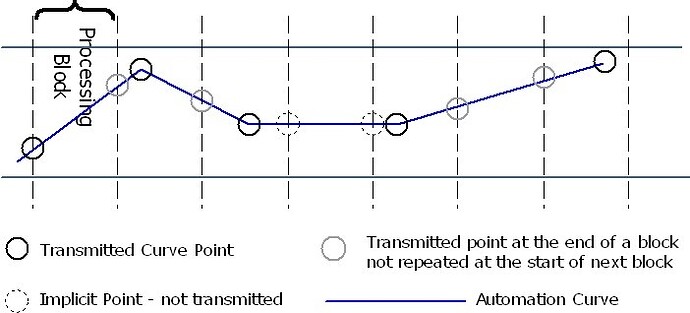

It’s kind of subtle, but VST3 parameter edits aren’t really synchronous parameter changes. They’re endpoints of an automation curve: VST 3 Interfaces: IParamValueQueue Class Reference.
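One way to read “endpoints of an automation curve”, assuming the piecewise-linear interpretation described in the VST3 SDK documentation: take a block’s queue points $(o_1, v_1), \dots, (o_K, v_K)$ sorted by sample offset with $0 < o_1 < \dots < o_K$, and let $(o_0, v_0) = (0, v_{\text{prev}})$, where $v_{\text{prev}}$ is the parameter value at the end of the previous block. The value at sample $n$ of the block would then be (the symbols $o_k$, $v_k$ are notation introduced here, not SDK names):

$$
v(n) =
\begin{cases}
v_{k-1} + \left(v_k - v_{k-1}\right)\dfrac{n - o_{k-1}}{o_k - o_{k-1}}, & o_{k-1} \le n \le o_k,\quad 1 \le k \le K,\\[6pt]
v_K, & n > o_K.
\end{cases}
$$

If the first point sits at offset 0, the $(o_0, v_0)$ segment is dropped and the curve starts directly at $v_1$. At each shared boundary $n = o_k$ both adjacent cases give $v_k$, so the curve is continuous.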

The queues are synchronous with the audio buffers in the sense that they both refer to the same time frame, and they’re both contained in the same structure passed as an argument to the process callback. The callback has parameter changes sent to it synchronously, that is, without the need for any thread synchronization. (Also, in VST3 terminology an edit is a UI operation.)

In addition to asimilon’s statement: what is even the point of backwards compatibility when it comes to JUCE? Are people really using the latest JUCE branch and somehow combining it with compiling VST2 or other old standards? I thought JUCE doesn’t actively provide VST2 support anymore. Isn’t it bug-prone to still use JUCE for compiling VST2 then, or am I missing something? I always thought people who still want to compile VST2 just use a much older JUCE branch, but correct me if I’m wrong.

(Not to be confused with backwards compatibility in hosts, btw… It’s important that hosts, at least the reasonable ones, keep supporting VST2, because lots of emotions are attached to certain VST2-era technology, and whole functionality would be lost if support were dropped. Also not to be confused with hosts that don’t even have “forwards compatibility” yet, like OBS, where you can’t import VST3.)

Yes, and there’s no “somehow combining” at the moment: it works out of the box (at least in my own daily experience).

Same here. As long as you have the VST2 SDK (and, of course, a signed agreement with Steinberg to distribute), it “just works”™.

There was a very strong case for keeping VST2 around before Ableton Live 10, when one of the major DAWs still had no VST3 support, but now I’m struggling to come up with decent justifications. The best I can do is “there might still be some people using Live 9”, but those people probably aren’t buying plugins anyway if they haven’t upgraded their host whilst 2 major versions have been released in the meantime, so why would I want to accommodate someone unlikely to compensate me for the effort?

Remember also that JUCE is used to develop hosts. And hosts still need to support VST2, as there are still major plugin vendors that only provide VST2, like UAD for example.

To come back to the topic:

What hasn’t been mentioned so far is that it would be good if JUCE-made hosts could send sample-accurate automation as well.

To warm up the topic: there is a very important argument for sample-accurate automation.

Even with the best smoothing algorithm in place, your plugin will render, and therefore sound, slightly different with different block sizes. That can be annoying and makes the result unreliable for the user: say they recorded a session at a block size of 128 and later open it somewhere else where the DAW is set to 2048 to have more CPU for mixing. Especially when you are doing automation that is synchronized to the beat, this change can really affect the sound of your music.

With the time of occurrence of each automation event available, this would be a thing of the past: all monitoring and bounces, whether done at a buffer size of 128 or 2048 samples, would sound exactly the same, which is what any user would expect.

One could argue that’s a problem for host authors and not plugin developers. One of the reasons you can’t rely on the block size being constant during playback is that hosts segment their buffers on automation and other events so that those are handled consistently, irrespective of the block size being sent out to the hardware.

If your parameter smoothing is implemented correctly, its output shouldn’t depend on the block size, only on the time of arrival of the events. And since you can’t control that (either as a plugin developer in general, or as a JUCE developer, where the events are all batched at the start of the rendering callback), it’s not something you can really deal with.
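To illustrate “shouldn’t depend on the block size”: a minimal sketch of a linear ramp whose state advances once per sample (similar in spirit to `juce::SmoothedValue`, but standalone so it compiles without JUCE). The class `LinearRamp` and the function `renderRamp` are hypothetical names for this example. Because the ramp advances per sample, chopping the stream into blocks of any size gives identical output, as long as the target is set at the same sample. The contrasting `perSample == false` mode evaluates the smoother once per block and holds that value, which is exactly the kind of smoothing that does change with block size.

```cpp
#include <algorithm>
#include <vector>

// Linear ramp whose state advances once per sample. Its output depends only on
// when the target was set, not on how the sample stream is split into blocks.
class LinearRamp
{
public:
    LinearRamp (float initialValue, int rampLengthSamples)
        : current (initialValue), target (initialValue), rampLength (rampLengthSamples) {}

    void setTarget (float newTarget)
    {
        target    = newTarget;
        stepsLeft = rampLength;
        step      = (target - current) / (float) rampLength;
    }

    float getNextValue()
    {
        if (stepsLeft > 0)
        {
            current += step;

            if (--stepsLeft == 0)
                current = target; // snap to the target to avoid rounding drift
        }

        return current;
    }

private:
    float current, target, step = 0.0f;
    int rampLength, stepsLeft = 0;
};

// Renders numSamples of a 0 -> 1 gain ramp (target set at sample 0, 1000-sample ramp)
// in blocks of blockSize. perSample == false models smoothing that is evaluated only
// once per block and then held for the whole block.
std::vector<float> renderRamp (int numSamples, int blockSize, bool perSample)
{
    LinearRamp ramp (0.0f, 1000);
    ramp.setTarget (1.0f);

    std::vector<float> out;

    for (int start = 0; start < numSamples; start += blockSize)
    {
        const int length = std::min (blockSize, numSamples - start);

        if (perSample)
        {
            for (int i = 0; i < length; ++i)
                out.push_back (ramp.getNextValue());
        }
        else
        {
            const float held = ramp.getNextValue(); // value at the block start

            for (int i = 1; i < length; ++i)
                ramp.getNextValue();                // keep the ramp's timing in sync

            out.insert (out.end(), (size_t) length, held);
        }
    }

    return out;
}
```

With `perSample == true`, rendering at 100, 128 or 2048 samples per block yields the same vector; with `perSample == false` the result changes with the block size.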

Granted it’s not perfect, but it’s something to be aware of.

IMO there’s a way to fix this at the API level, which looks something like:

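```cpp
#include <variant>

// Placeholder payload types; their contents depend on the plugin API being wrapped.
struct AudioEvent     { /* a span of audio samples */ };
struct MidiEvent      { /* a timestamped MIDI message */ };
struct ParameterEvent { /* parameter id, value, sample offset */ };

class Event {
public:
    // Listed in the same order as the variant alternatives below,
    // so kind() can be derived from the variant's index.
    enum class Kind {
        Audio,
        Midi,
        Parameter,
    };

    Kind kind() const { return static_cast<Kind> (event.index()); }

    AudioEvent     audio()     const { return std::get<AudioEvent> (event); }
    MidiEvent      midi()      const { return std::get<MidiEvent> (event); }
    ParameterEvent parameter() const { return std::get<ParameterEvent> (event); }

private:
    std::variant<AudioEvent, MidiEvent, ParameterEvent> event;
};

// ... (MyAudioProcessor declares processPerfect, processAudio, processMidi and processParameter)

void MyAudioProcessor::processPerfect (Event event) {
    switch (event.kind()) {
        case Event::Kind::Audio:     { processAudio (event.audio());         break; }
        case Event::Kind::Midi:      { processMidi (event.midi());           break; }
        case Event::Kind::Parameter: { processParameter (event.parameter()); break; }
    }
}
```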

Your plugin then implements processAudio, processMidi and processParameter, and the wrapping code is responsible for segmenting and sorting the incoming data from the API so that events are delivered in time order. The annoying part of the implementation is that you can’t guarantee these events are given to the plugin in sorted order, and in some APIs (looking at you, VST3) the parameter events aren’t really events but slices of a piecewise-linear function that you need to handle accordingly.
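The segmenting/sorting job of the wrapper could look roughly like this. It is a sketch under assumptions: `TimedParameterChange` and `dispatchInTimeOrder` are hypothetical names, each change is treated as a point event at a sample offset inside the current block, and VST3-style ramps would first have to be converted into such points (or handled by a ramp helper). Events are stably sorted, so changes at the same offset keep their arrival order, and the audio is split at every offset where an event occurs.

```cpp
#include <algorithm>
#include <functional>
#include <vector>

// Hypothetical timestamped parameter change as delivered by some plugin API.
struct TimedParameterChange
{
    int sampleOffset; // position within the current block
    int parameterId;
    float value;
};

// Splits a block of numSamples into audio sub-ranges at each event's offset and
// delivers audio and events in time order. Events may arrive unsorted, so they are
// stably sorted first (events at the same offset keep their original order).
void dispatchInTimeOrder (int numSamples,
                          std::vector<TimedParameterChange> events,
                          const std::function<void (int start, int length)>& processAudio,
                          const std::function<void (const TimedParameterChange&)>& processParameter)
{
    std::stable_sort (events.begin(), events.end(),
                      [] (const TimedParameterChange& a, const TimedParameterChange& b)
                      { return a.sampleOffset < b.sampleOffset; });

    int position = 0;

    for (const auto& e : events)
    {
        // Guard against offsets outside the block (clamping keeps the sorted order).
        const int offset = std::clamp (e.sampleOffset, 0, numSamples);

        if (offset > position)
        {
            processAudio (position, offset - position); // audio up to the event
            position = offset;
        }

        processParameter (e);
    }

    if (position < numSamples)
        processAudio (position, numSamples - position); // remainder of the block
}
```

For a 512-sample block with changes arriving as offsets 300 then 100, this calls processAudio for the ranges starting at 0, 100 and 300 (lengths 100, 200, 212) and processes the offset-100 change before the offset-300 one.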

This is definitely true! I can personally confirm that I noticed this issue even before I knew what parameter smoothing was, before I had written a single line of code in my life. When I was making music, at some point I noticed that with certain filter plugins you just can’t sweep back to the original value at the end of a build-up without putting the automation end point slightly before the actual drop, if you want the transition to be mostly clean. But it was always a bit down to chance whether it would turn out perfectly fine, so I always just tried to estimate how much I’d have to negatively delay the automation to make it work most of the time. I always felt like these kinds of things kept my music from being as tight as that of some crazy dubstep producers, and wondered how they got their transitions so perfect all the time.

I think this is part of the reason why people nowadays don’t work with long effect chains anymore, but just throw samples into the DAW: it is the only way to make sure that every time your loop repeats, it actually sounds exactly as you planned. In that sense, JUCE’s missing sample accuracy has a big impact on the way music is made, and it’s not a good impact.

Yeah. Perhaps JUCE could leave the existing juce::AudioProcessor as-is and, in addition, provide a new alternative that also delivers sample-accurate parameter events to processBlock. This would allow people to migrate to the new style at their leisure.

I don’t think mixing the old-style and the new style in the same class is a good idea, because it will needlessly complicate the processor.

```cpp
// Proposed signature; juce::EventBuffer is hypothetical and not part of JUCE.
void processBlock (juce::AudioBuffer<float>&, juce::EventBuffer&) override;
```

Actually, sample-accurate wouldn’t be enough; it must also include the ramp information where possible (if the plugin format supports that).

But it wouldn’t make sense for every developer to write their own implementation of how this is handled.

The best way would be for JUCE to introduce some kind of helper class, similar to SmoothedValue, that makes it easy to retrieve the correct parameter value for each sample via .getNextValue().
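A rough idea of what such a helper could look like, as a sketch only: `SampleAccurateValue`, `RampPoint` and `startBlock()` are hypothetical names, not JUCE API. It assumes the wrapper hands over one block’s ramp points sorted by offset, interprets them VST3-style (linear interpolation starting from the previous block’s last value at offset 0, held after the last point), and lets the processing code pull one value per sample with `getNextValue()`, like `juce::SmoothedValue`.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical ramp point for one block: the curve reaches `value` at `sampleOffset`.
struct RampPoint
{
    int sampleOffset;
    float value;
};

// Hypothetical helper in the spirit of juce::SmoothedValue (not a JUCE class).
// Call startBlock() once per processBlock with that block's points (sorted by offset),
// then getNextValue() once per sample.
class SampleAccurateValue
{
public:
    explicit SampleAccurateValue (float initialValue) : current (initialValue) {}

    void startBlock (std::vector<RampPoint> blockPoints)
    {
        points            = std::move (blockPoints);
        nextPoint         = 0;
        sample            = 0;
        segmentStart      = 0;
        segmentStartValue = current; // ramp from where the previous block ended
    }

    float getNextValue()
    {
        // Advance past points we have reached; a point at offset 0 acts as a jump.
        while (nextPoint < points.size() && points[nextPoint].sampleOffset <= sample)
        {
            segmentStart      = points[nextPoint].sampleOffset;
            segmentStartValue = points[nextPoint].value;
            ++nextPoint;
        }

        if (nextPoint < points.size())
        {
            // Linear interpolation towards the next point (its offset is > segmentStart).
            const RampPoint& target = points[nextPoint];
            const float t = (float) (sample - segmentStart)
                          / (float) (target.sampleOffset - segmentStart);
            current = segmentStartValue + (target.value - segmentStartValue) * t;
        }
        else
        {
            current = segmentStartValue; // hold the last point's value
        }

        ++sample;
        return current;
    }

private:
    std::vector<RampPoint> points;
    std::size_t nextPoint = 0;
    int sample = 0, segmentStart = 0;
    float current, segmentStartValue = 0.0f;
};
```

Starting from 0.0 with a single point `{ 4, 1.0f }`, successive calls return 0.0, 0.25, 0.5, 0.75, 1.0 and then hold 1.0, also into the next block if it has no points.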

Maybe we can just wait it out until MIDI 2.0 becomes the new quasi-standard for automation… (scnr)

IMHO a protocol similar to the VST3 SDK would make sense:

This would mean simply adding some functions to the existing RangedAudioParameter subclasses (and the templated get() function):

```cpp
// Proposed additions (not existing JUCE API):
ValueType get (int sampleNum = 0);   // fully backwards compatible
int getNumCurvePoints() const;
int getSamplesPositionOfCurvePoint (int curvePointIndex) const;
```

And then you can create helper functions like the one @chkn was probably thinking of:

```cpp
static inline void multiplyWithParameter (juce::AudioBuffer<float>& buffer,
                                          const juce::AudioParameterFloat& parameter,
                                          float factor = 1.0f);
```

No need for changes in processBlock’s signature at all…
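A standalone sketch of how the proposed methods and the helper could fit together, with several assumptions labeled here because the proposal doesn’t spell them out: `CurveParameter` is a hypothetical stand-in for a RangedAudioParameter extended with the proposed methods, `setCurve()` is a hypothetical wrapper-side setter, `Buffer` (one `std::vector<float>` per channel) stands in for `juce::AudioBuffer<float>`, curve point 0 is assumed to sit at sample 0, values are linearly interpolated between points and held after the last one, and `factor` is assumed to scale the parameter value. Under those assumptions a single curve point means “constant for this block”, which gives the helper a cheap fast path.

```cpp
#include <algorithm>
#include <iterator>
#include <utility>
#include <vector>

// Hypothetical stand-in for a parameter with the proposed curve methods (not JUCE API).
class CurveParameter
{
public:
    explicit CurveParameter (float initialValue) : points { { 0, initialValue } } {}

    // Wrapper side: this block's curve as (samplePosition, value) pairs,
    // sorted by position, non-empty, with the first position at 0.
    void setCurve (std::vector<std::pair<int, float>> newPoints) { points = std::move (newPoints); }

    // Value at sampleNum (>= 0); get() with no argument behaves like today's get().
    float get (int sampleNum = 0) const
    {
        // First point strictly after sampleNum.
        auto upper = std::upper_bound (points.begin(), points.end(), sampleNum,
                                       [] (int s, const std::pair<int, float>& p) { return s < p.first; });

        if (upper == points.end())
            return points.back().second;         // hold the last value

        auto lower = std::prev (upper);          // valid because point 0 is at sample 0
        const float t = (float) (sampleNum - lower->first) / (float) (upper->first - lower->first);
        return lower->second + (upper->second - lower->second) * t;
    }

    int getNumCurvePoints() const { return (int) points.size(); }

    int getSamplesPositionOfCurvePoint (int curvePointIndex) const
    {
        return points[(size_t) curvePointIndex].first;
    }

private:
    std::vector<std::pair<int, float>> points;
};

using Buffer = std::vector<std::vector<float>>; // stand-in for juce::AudioBuffer<float>

// Multiplies every sample by parameter * factor, per sample only when the curve changes.
inline void multiplyWithParameter (Buffer& buffer, const CurveParameter& parameter, float factor = 1.0f)
{
    for (auto& channel : buffer)
    {
        if (parameter.getNumCurvePoints() == 1)
        {
            const float gain = parameter.get() * factor; // constant for this block
            for (auto& s : channel)
                s *= gain;
        }
        else
        {
            for (size_t i = 0; i < channel.size(); ++i)
                channel[i] *= parameter.get ((int) i) * factor;
        }
    }
}
```

With the curve `{ { 0, 0.0f }, { 4, 1.0f } }`, a five-sample buffer of ones becomes 0.0, 0.25, 0.5, 0.75, 1.0; with only the initial point, the whole buffer is scaled by one constant.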

That’s a nice diagram!

Yes, that is exactly how I envisioned it; I love this API.

This shouldn’t be a breaking change at all, as it’s purely additive and will work the same way for hosts/plugins that didn’t support it previously.

Usually, one can save a lot of CPU by recognizing that only a few parameters have changed since the previous processBlock.


Under this scheme, how would we know which parameters have updates?

Do you envision that we poll all parameters in every processBlock?

How would we know where to split the processing buffer? Do we have to loop over all the parameters to figure out which parameter event is ‘next’, in order to split the buffer at that point?

This API works exactly the same as the original AudioProcessorParameter. If you need to know the last parameter value, you simply keep it from the last processBlock run.

No, this method is a method of AudioProcessorParameter, so it is per parameter.

Usually you would get the parameter once. And if getNumCurvePoints() returns 1, it means the parameter is constant in that block.

Note that you can still just query the value at sample 0 and get the same behaviour as you have now.

There is no need to split the buffer any more, because the information for every parameter at every sample position can be retrieved.