Hello,

This may be something nobody else wants to do, but I need buttons in my MIDI-processing VST which will eventually trigger chords. Right now, to test this out, I have a simple plugin based on the Audio Demo Plugin. It transposes the MIDI passed through it up by 5 semitones.

My aim at this stage is to trigger just one note, note number 80, G# in the 4th octave, which should then be transposed to 85, C# in the 5th octave. I have a button named ‘Note On Button’ which triggers:

[code]MidiBuffer chordOutput;
chordOutput.addEvent (MidiMessage::noteOn (1, 80, (uint8) 100), 0);

// AudioSampleBuffer's constructor takes (numChannels, numSamples)
AudioSampleBuffer buffer (2, 512);
getProcessor()->processBlock (buffer, chordOutput);[/code]

and a ‘Note Off Button’ that triggers:

[code]MidiBuffer chordOutput;
chordOutput.addEvent (MidiMessage::noteOff (1, 80), 0);

// AudioSampleBuffer's constructor takes (numChannels, numSamples)
AudioSampleBuffer buffer (2, 512);
getProcessor()->processBlock (buffer, chordOutput);[/code]
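As a sanity check on the note numbers above, here is a small sketch of how I am naming them. It assumes the octave numbering in which note 60 (middle C) is called C3, which I believe is what Live displays; other software calls the same note C4. `midiNoteName` is a helper name I made up for illustration, not something from JUCE.

```cpp
#include <cassert>
#include <string>

// Hypothetical helper (not part of JUCE): names a MIDI note number,
// assuming the convention where note 60 (middle C) is called C3.
std::string midiNoteName (int noteNumber)
{
    assert (noteNumber >= 0 && noteNumber <= 127);

    static const char* const names[] = { "C", "C#", "D", "D#", "E", "F",
                                         "F#", "G", "G#", "A", "A#", "B" };

    const int octave = noteNumber / 12 - 2;   // e.g. 60 / 12 - 2 = 3
    return std::string (names[noteNumber % 12]) + std::to_string (octave);
}
```

With this convention, `midiNoteName (80)` gives G#4 and `midiNoteName (80 + 5)` gives C#5, which matches what I expect to see after the transpose.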

My processBlock method is like this:

[code]void ZS1AudioProcessor::processBlock (AudioSampleBuffer& buffer, MidiBuffer& midiMessages)
{
    const int numSamples = buffer.getNumSamples();
    int channel, dp = 0;

    // Go through the incoming data, and apply our gain to it...
    for (channel = 0; channel < getNumInputChannels(); ++channel)
        buffer.applyGain (channel, 0, buffer.getNumSamples(), gain);

    // Now pass any incoming midi messages to our keyboard state object, and let it
    // add messages to the buffer if the user is clicking on the on-screen keys.
    // Any MIDI I play will be picked up and then transposed before being passed out of the plugin.
    keyboardState.processNextMidiBuffer (midiMessages, 0, numSamples, true);

    // and now get the synth to process these midi events and generate its output.
    synth.renderNextBlock (buffer, midiMessages, 0, numSamples);

    // Apply our delay effect to the new output..
    for (channel = 0; channel < getNumInputChannels(); ++channel)
    {
        float* channelData = buffer.getSampleData (channel);
        float* delayData = delayBuffer.getSampleData (jmin (channel, delayBuffer.getNumChannels() - 1));
        dp = delayPosition;

        for (int i = 0; i < numSamples; ++i)
        {
            const float in = channelData[i];
            channelData[i] += delayData[dp];
            delayData[dp] = (delayData[dp] + in) * delay;

            // Wrap at the end of the delay buffer (valid indices are 0 .. size - 1)
            if (++dp >= delayBuffer.getNumSamples())
                dp = 0;
        }
    }

    delayPosition = dp;

    // In case we have more outputs than inputs, we'll clear any output
    // channels that didn't contain input data, (because these aren't
    // guaranteed to be empty - they may contain garbage).
    for (int i = getNumInputChannels(); i < getNumOutputChannels(); ++i)
        buffer.clear (i, 0, buffer.getNumSamples());

    // ask the host for the current time so we can display it...
    AudioPlayHead::CurrentPositionInfo newTime;

    if (getPlayHead() != 0 && getPlayHead()->getCurrentPosition (newTime))
    {
        // Successfully got the current time from the host..
        lastPosInfo = newTime;
    }
    else
    {
        // If the host fails to fill-in the current time, we'll just clear it to a default..
        lastPosInfo.resetToDefault();
    }

    // Transpose every note-on and note-off by 5 semitones into a new buffer
    MidiBuffer output;
    MidiBuffer::Iterator mid_buffer_iter (midiMessages);
    MidiMessage m (0xf0);
    int sample;

    while (mid_buffer_iter.getNextEvent (m, sample))
    {
        if (m.isNoteOn())
        {
            const int ch = m.getChannel();
            const int tnote = m.getNoteNumber() + 5;
            const uint8 v = m.getVelocity();
            output.addEvent (MidiMessage::noteOn (ch, tnote, v), sample);
        }
        else if (m.isNoteOff())
        {
            const int ch = m.getChannel();
            const int tnote = m.getNoteNumber() + 5;
            const uint8 v = m.getVelocity();
            output.addEvent (MidiMessage::noteOff (ch, tnote, v), sample);
        }
    }

    // Replace the incoming MIDI with the transposed version
    midiMessages.clear();
    midiMessages = output;
}[/code]

Here’s the problem. I can see and hear the MIDI and audio buffers I made with the [color=#FF0000]Note On Button[/color], but [color=#FF0000]these messages are not transposed and are not output from the synth[/color]. The strange thing is that MIDI from pressing the Juce keyboard and MIDI passed through my VST to another channel (see image below) are both correctly transposed and output. I cannot think why my buffers should be treated any differently.

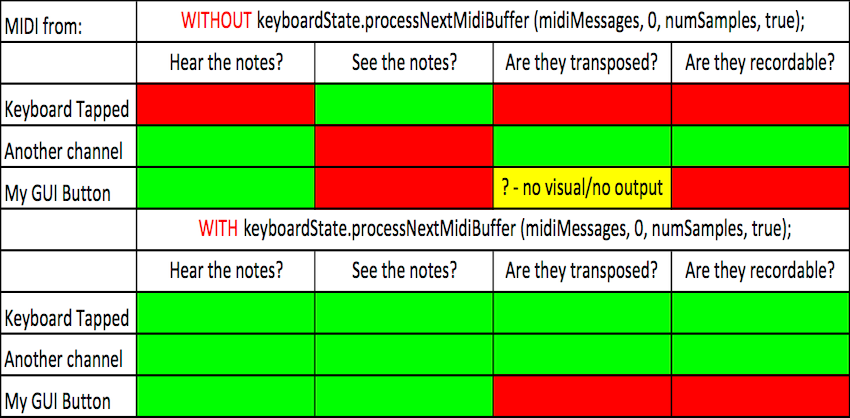

I also tried omitting the line keyboardState.processNextMidiBuffer (midiMessages, 0, numSamples, true); from processBlock. My observations are summarised below.

[color=#FF0000]Without[/color] that line, the note from my button [color=#FF0000]is audible[/color] but [color=#FF0000]doesn’t[/color] get displayed on the MIDI keyboard. Pressing the MIDI keyboard [color=#FF0000]displays the notes[/color] but [color=#FF0000]doesn’t trigger the synth[/color]. Neither my GUI button’s note nor any notes I input on the keyboard are recordable by another channel in Ableton. Lastly, the MIDI I pass to another channel in Live is correctly transposed and recorded. I hope this info helps somebody help me out.

It seems the buffers I created with the buttons are somehow removed in processBlock.

Maybe the problem is that I should send the Note On and the Note Off in the same buffer, separated by the desired gap, and adjust the sampleNumber of each accordingly? The method in question is:

void MidiBuffer::addEvent (const MidiMessage& m, const int sampleNumber)

If this is the problem, how do I convert a desired note length into a sampleNumber?
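To make the sampleNumber question concrete, here is a rough sketch of the arithmetic as I understand it: a length in seconds times the sample rate gives a length in samples, and dividing that by the block size tells me which later block the note-off belongs in and at what offset. `EventPosition` and `locateNoteOff` are names I made up, not JUCE API, and the sketch assumes every block has the same size, which I gather hosts do not guarantee.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical result type (not a JUCE class): where a future event lands.
struct EventPosition
{
    long blocksAhead;   // how many whole processBlock calls to wait
    int  sampleOffset;  // sampleNumber to use within that later block
};

// Converts a note length in seconds into a block count and an in-block
// offset, measured from the offset at which the note-on was added.
// Assumes a constant block size (an assumption, not a host guarantee).
EventPosition locateNoteOff (double noteLengthSeconds, double sampleRate,
                             int blockSize, int noteOnOffset)
{
    assert (noteLengthSeconds >= 0.0 && sampleRate > 0.0);
    assert (blockSize > 0 && noteOnOffset >= 0 && noteOnOffset < blockSize);

    const long lengthInSamples = std::lround (noteLengthSeconds * sampleRate);
    const long absolute = noteOnOffset + lengthInSamples;

    return { absolute / blockSize, static_cast<int> (absolute % blockSize) };
}
```

For example, a half-second note at 44100 Hz with 512-sample blocks is 22050 samples long, so a note-on at offset 0 would need its note-off 43 blocks later at sampleNumber 34. If that is right, a note longer than one block cannot have both events in the same buffer, and the note-off would have to be added in a later processBlock call.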

I need the button pressed in my GUI to trigger a chord that the DAW can record. How can this be achieved? Is it even possible? Jules, if you are reading, can you help? If not, is there a way to ‘press the MIDI keyboard’ programmatically with my button, or somehow achieve the desired result?
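One idea I have, as a sketch only: instead of calling processBlock myself with a private buffer, the button could queue its notes, and the processBlock call made by the host could merge them into the host's own MIDI buffer before transposing. The code below uses plain C++ stand-ins so it runs without JUCE; `NoteEvent`, `ChordTriggerSketch` and `queueFromGui` are hypothetical names, and locking a mutex on the audio thread is a simplification I would probably want to replace. In JUCE itself I suspect calling keyboardState.noteOn() from the button might do the same job, since processNextMidiBuffer is already asked to inject on-screen key presses, but I have not confirmed that.

```cpp
#include <cassert>
#include <mutex>
#include <vector>

// Hypothetical stand-in for a MIDI note event (not a JUCE class).
struct NoteEvent
{
    bool isNoteOn;
    int  channel;
    int  noteNumber;
    int  velocity;
    int  sampleOffset;
};

// Hypothetical processor sketch: the GUI thread queues events, and the
// audio callback merges them into the host's buffer before transposing.
class ChordTriggerSketch
{
public:
    // Called from the GUI thread, e.g. in a button callback.
    void queueFromGui (const NoteEvent& e)
    {
        assert (e.noteNumber >= 0 && e.noteNumber + 5 <= 127);

        const std::lock_guard<std::mutex> lock (pendingMutex);
        pending.push_back (e);
    }

    // Called with the host's own event list for this block; edits it in place.
    void processBlock (std::vector<NoteEvent>& hostEvents)
    {
        {
            const std::lock_guard<std::mutex> lock (pendingMutex);

            for (NoteEvent e : pending)
            {
                e.sampleOffset = 0;          // play at the start of this block
                hostEvents.push_back (e);
            }

            pending.clear();
        }

        for (NoteEvent& e : hostEvents)
            e.noteNumber += 5;               // same +5 transpose as my plugin
    }

private:
    std::mutex pendingMutex;
    std::vector<NoteEvent> pending;
};
```

A chord would then just be several queueFromGui calls from one button press, and because the notes end up in the buffer the host passed in, I would expect them to be transposed and recordable like any other MIDI leaving the plugin.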

Hoping for a solution,

Dave