I don’t want midi input to pass to midi output.

I am making a type of arpeggiator.

I tried midibuffer.clear()

But it also erases the output midi I have already put into the buffer.

If I understand your question…

You can make a copy of the incoming MidiBuffer, then clear the incoming and add output to it while processing the copy.

I have tried that but my problem is that processBlock() gets called so frequently that whatever I added to the buffer will get cleared the next time around before going out.

The host controls when the processBlock() is called. So, you must code accordingly. This means that you must keep your own buffer of the incoming data (most likely a FIFO). This will need to be at class scope, and not a local variable in the processBlock(). That will allow you to access it for each call of processBlock().

Note: you can not fall behind though, unless you are intentionally creating a delay. You must process and deliver each block in step with the host calls to processBlock().

Also, note that the host can call processBlock() with varying sizes. Your code must accommodate that.

I want to create outgoing data without the incoming data getting mixed in.

i think the juce team should make a statement on how to use midi buffers in general. it’s just super confusing. you can’t index midi events to change them. you can add events for some reason, even though everyone always says never allocate in processBlock. then what is this? i want clear answers. what can and what can’t you do with a midi buffer? what are the recommended ways in 2023 when writing various types of midi effects and/or synths?

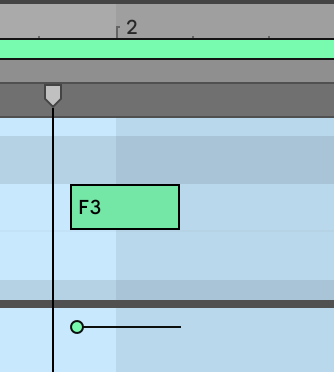

In my MIDI processing plugin, I have both an incoming MidiBuffer and outgoing MidiBuffer at the class level (as @bwall mentioned). When MIDI comes in, I merge the incoming buffer with my inBuffer (since mine may or may not be empty yet), process that midi and put my results in my outBuffer, and then swap it with the incoming MidiBuffer that was passed in, thereby sending it out. (simplified explanation…)

You don’t need to have the incoming data mixed with the outgoing data unless you specifically want to put it back in.

Copy the incoming MIDI to your inBuffer.

Work with your inBuffer to determine what events to generate, and stick them in your outBuffer.

Swap your outBuffer with the incoming MidiBuffer and whatever you’ve generated will be sent downline, without any incoming Midi events (unless you copy them from your inBuffer to your outBuffer).

Something like that…
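The copy / process / swap steps above can be sketched roughly as follows. This is a minimal sketch in plain C++, not JUCE code: `Event`, `EventList` and `ArpSketch` are hypothetical stand-ins (a `std::vector` plays the role of `juce::MidiBuffer`), and the "one octave up" rule is just a placeholder for whatever your arpeggiator generates. In a real plugin the same shape would presumably use `MidiBuffer::addEvents`, `MidiBuffer::clear` and `MidiBuffer::swapWith`.

```cpp
#include <utility>
#include <vector>

// Hypothetical stand-in for one MIDI event inside a block.
struct Event
{
    int samplePosition;   // offset inside the current block
    int noteNumber;
    bool isNoteOn;
};

using EventList = std::vector<Event>;   // stand-in for juce::MidiBuffer (assumption)

class ArpSketch
{
public:
    // Same shape as processBlock(): hostMidi carries input in and output out.
    void processBlock (EventList& hostMidi, int numSamples)
    {
        // 1. Copy the incoming events into the class-level input buffer.
        inBuffer.insert (inBuffer.end(), hostMidi.begin(), hostMidi.end());

        // 2. Generate output from the input. Placeholder rule: every note-on
        //    becomes a note-on one octave higher at the same sample position.
        outBuffer.clear();
        for (const auto& e : inBuffer)
            if (e.isNoteOn && e.samplePosition < numSamples)
                outBuffer.push_back ({ e.samplePosition, e.noteNumber + 12, true });
        inBuffer.clear();

        // 3. Swap: the host now sees only the generated events.
        std::swap (hostMidi, outBuffer);
    }

private:
    EventList inBuffer, outBuffer;   // class scope, so they survive between calls
};
```

After the swap, `outBuffer` holds the old incoming events, which is harmless here because it is cleared at the start of the next call.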

You can call MidiBuffer::ensureSize with a reasonable guess about how much preallocated size you are going to need before the real time processing happens.

yeah but what is a reasonable guess? and what happens if the user ever tries something unreasonable? i like to set all my processBlock methods to noexcept but i couldn’t faithfully do that not knowing if nasty midi files could break the plugin. also this was just an example. i think there should be more resources on how to use the midi buffer in general: some example code for each of midibuffer’s methods and intended workflows.

The MidiBuffer is just a wrapper around juce::Array, so pretty much anything that applies to juce::Array would apply to juce::MidiBuffer also. It’s of course a bit difficult to make guesses about the needed preallocation size, but I would myself expect that room for something like a “few thousand” MIDI messages would usually be enough. And if the user feeds in more data than that, you’d just live with the possibility that a further allocation is needed and an audio glitch might happen.
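To illustrate the preallocation idea, here is a small sketch in plain C++, with a `std::vector` of bytes standing in for the buffer’s storage. `ByteEventStore` and its methods are hypothetical names, not JUCE API; the real call discussed above is `MidiBuffer::ensureSize`, which as far as I know takes a size in bytes rather than a message count.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical stand-in for the byte storage behind a MIDI buffer.
class ByteEventStore
{
public:
    // Call from a non-real-time context (e.g. where prepareToPlay() would run).
    void reserveBytes (std::size_t numBytes)   { bytes.reserve (numBytes); }

    // Appends a 3-byte message. Returns true if it fit into the space that
    // was already reserved, i.e. the storage did not have to grow.
    bool addShortMessage (std::uint8_t status, std::uint8_t d1, std::uint8_t d2)
    {
        const bool fits = bytes.size() + 3 <= bytes.capacity();
        bytes.insert (bytes.end(), { status, d1, d2 });
        return fits;
    }

    // Empties the store; std::vector::clear() keeps the reserved capacity.
    void clear()   { bytes.clear(); }

    std::size_t capacity() const   { return bytes.capacity(); }

private:
    std::vector<std::uint8_t> bytes;
};
```

As long as `addShortMessage` keeps returning true, no reallocation happened; the first false is the point where a real plugin would risk the glitch mentioned above.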

I have tried that but my problem is that processBlock() gets called so frequently that whatever I added to the buffer will get cleared the next time around before going out.

What you say doesn’t make sense. In processBlock

void processBlock (AudioSampleBuffer& buffer, MidiBuffer& midiBuffer)

the content of midiBuffer is only valid for this particular call, which represents a tiny fraction of time.

At a sample rate of 44 100 Hz, a buffer size of, say, 441 samples (hosts more commonly use powers of two such as 512) represents a time of 10 ms.

So if you connect a piano keyboard to your arpeggiator and press a key, then immediately at the next call to processBlock there will be a note-on midi event available in the midibuffer.

On the next call it will be gone, i.e. it’s not permanently stored in the midibuffer. There is no “next time around before going out”. It’s going out immediately, before next time.

Now if you e.g want to make a chord out of the lonely note you detected, you can add some additional note-on:s with other note numbers to the midiBuffer.

You can decide if you want to keep the original note or not. To get rid of it, call midiBuffer.clear() before you add your new notes (after you have read the note number you need from it).

The result will be heard as notes being played simultaneously, provided you have a sampler or the like connected to the output of your plugin/DAW, of course.
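A rough sketch of that chord idea, in plain C++ rather than JUCE: `NoteOn` and `makeChords` are hypothetical names, and the major-triad intervals are just an example choice. The point is that the added notes reuse the original note’s sample position, and that the incoming notes are read before anything is cleared.

```cpp
#include <initializer_list>
#include <vector>

// Hypothetical stand-in for a note-on event in the current block.
struct NoteOn
{
    int samplePosition;
    int noteNumber;
};

// Builds the outgoing events for one block: each incoming note-on gets a
// major third and a fifth added at the same sample position. The original
// note is kept only if keepOriginal is true.
std::vector<NoteOn> makeChords (const std::vector<NoteOn>& incoming, bool keepOriginal)
{
    std::vector<NoteOn> out;

    for (const auto& n : incoming)
    {
        if (keepOriginal)
            out.push_back (n);

        for (int interval : { 4, 7 })   // semitones above the played note
            out.push_back ({ n.samplePosition, n.noteNumber + interval });
    }

    return out;
}
```

In a real processBlock you would presumably collect the incoming notes first, clear the MidiBuffer if the original is not wanted, and then add the generated events.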

If you want the notes to be separated in time, as I assume from your talk of making an arpeggiator, you need to store the detected note-on (or at least its note number).

Then you have to calculate the number of samples that should pass before your added notes should play. For a delay of 1 second this means 44 100 samples, which equals 100 buffer calls in our example.

So you detect the note-on, then possibly store it. Wait and count the number of processBlock calls. Then at the 100th call you add a note-on to the midiBuffer. Then you will hear the original note and, 1 second later, your generated arpeggio note.
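The counting described above can also be done in samples instead of calls, which keeps working when the host changes the block size. Below is a minimal sketch in plain C++ under that assumption; `DelayedNoteScheduler`, `ScheduledNote` and `OutputEvent` are hypothetical names, and the `std::vector`s here allocate, so a real-time version would want preallocated storage.

```cpp
#include <vector>

struct ScheduledNote
{
    int noteNumber;
    long long samplesUntilDue;   // measured from the start of the current block
};

struct OutputEvent
{
    int samplePosition;   // offset inside the block where the note fires
    int noteNumber;
};

// Lives at class scope in the processor so it survives between calls.
class DelayedNoteScheduler
{
public:
    // Schedule a note delaySamples after a note-on seen at offsetInBlock.
    void schedule (int noteNumber, int offsetInBlock, long long delaySamples)
    {
        pending.push_back ({ noteNumber, offsetInBlock + delaySamples });
    }

    // Call once per block, after scheduling this block's notes. Returns the
    // notes that fall inside this block and moves the rest forward in time.
    std::vector<OutputEvent> advance (int numSamples)
    {
        std::vector<OutputEvent> due;
        std::vector<ScheduledNote> stillPending;

        for (const auto& n : pending)
        {
            if (n.samplesUntilDue < numSamples)
                due.push_back ({ (int) n.samplesUntilDue, n.noteNumber });
            else
                stillPending.push_back ({ n.noteNumber, n.samplesUntilDue - numSamples });
        }

        pending.swap (stillPending);
        return due;
    }

private:
    std::vector<ScheduledNote> pending;
};
```

With 441-sample blocks and a 44 100-sample delay, a note-on seen at offset 10 comes back out 100 blocks later, again at offset 10, which matches the arithmetic in the post above.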

Hope this helps…

“Normal” MIDI events are small enough to not allocate. We’re talking about note-ons, note-offs, CC params and stuff like that, i.e. most of the output from a live keyboard being played.

e.g

midiBuffer.addEvent (MidiMessage::noteOn (1, 65, 0.7f), 0);

doesn’t allocate for the message itself: it just creates a message on the stack and copies its bytes into the midiBuffer at the given sample position (0 here).

When dealing with MIDI messages from an unknown source, e.g. MIDI files, where there could be plenty of large events like text meta events and even sysex events, you should always check their sizes before shoving them into the midibuffer in a process call. Typically do a check like

if (msg.numBytes <= sizeof msg.data)

{

//midi event small enough to not allocate

}

sizeof msg.data might look dubious, and it is: it is just the size of a pointer, i.e. 8 bytes on a 64-bit build.

There is already a function for this in MidiMessage.h

inline bool isHeapAllocated() const noexcept { return size > (int)sizeof(packedData); }

with a meaningful name, but unfortunately it’s made private for reasons I can’t understand.
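Since that function is private, one workaround is a tiny helper of your own that does the same comparison with a readable name. This is only a sketch: `inlineCapacity` and `fitsInline` are hypothetical names, and the pointer-sized inline storage is an assumption taken from the `isHeapAllocated()` line quoted above, not something guaranteed by the public API.

```cpp
#include <cstddef>

// Assumption: short messages are stored inline in pointer-sized space,
// as the quoted isHeapAllocated() implementation suggests.
constexpr std::size_t inlineCapacity = sizeof (void*);

// True if a message of numBytes would be small enough to avoid a heap
// allocation under that assumption.
constexpr bool fitsInline (std::size_t numBytes)
{
    return numBytes <= inlineCapacity;
}
```

A note-on is 3 bytes, so `fitsInline (3)` holds on both 32-bit and 64-bit builds, while a 64-byte sysex message does not fit.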

What do you mean you can’t index MIDI events?

Helps a lot and the fact that I wasn’t making sense helped me realize there was a bug.

My processing code was getting called twice per block.

Is there a way to get better than sample-accurate timing?

Because

auto message = juce::MidiMessage::noteOn (1, 65, (juce::uint8) 100);

message.setTimeStamp(beepSample);

processedBuffer.addEvent(message, beepSample);

This gets into Ableton ever so slightly before the beat

and this

auto message = juce::MidiMessage::noteOn (1, 65, (juce::uint8) 100);

message.setTimeStamp(beepSample+1);

processedBuffer.addEvent(message, beepSample+1);

comes in to Ableton ever so slightly after the beat.

I have to zoom in quite a bit to see it, but I’d prefer perfect.

This is a midi VST I’m creating.

For a start you could skip the line

message.setTimeStamp(beepSample);

since addEvent ignores the message’s timestamp and uses only the sample position you pass as the second argument.

That is, if you don’t intend to use this actual MidiMessage further down in your function.

How do you know it’s not on the beat? Who decides the beat, Ableton? Maybe it’s Ableton that’s not keeping up?

Without being an expert in sample-accuracy, I would reckon your result as being fully acceptable.

If you want, you could do an interesting test by looking up the exact same sample in the AudioSampleBuffer and setting it to something != 0 (assuming it’s silent otherwise)

using

AudioSampleBuffer::getWritePointer (int channelNumber, int sampleIndex)

to do something like

e.g. *buffer.getWritePointer (0, beepSample) = 1.0f;

Zooming in on the difference between the resulting audio and MIDI streams would possibly tell you more about the real sample accuracy. And any transient (like this) will probably be smeared out anyway, making talk about sub-sample accuracy pretty pointless…

And of course, don’t try this 1.0f dirac sample at home with your loudspeakers at full…
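On the “slightly before / slightly after” observation: one possible explanation (an assumption, not something confirmed in this thread) is that the beat simply doesn’t fall on a whole sample at that tempo. The sample position passed to addEvent is an integer, so the best you can do is pick the nearest sample. A small sketch in plain C++, with hypothetical names `beatToSamples`, `Placement` and `placeBeat`:

```cpp
#include <cmath>

// Exact (possibly fractional) sample position of a beat, counted from the
// start of playback at a constant tempo.
double beatToSamples (double beat, double bpm, double sampleRate)
{
    return beat * 60.0 / bpm * sampleRate;
}

struct Placement
{
    long long before;    // last whole sample at or before the beat
    long long after;     // first whole sample at or after the beat
    long long nearest;   // whole sample closest to the beat
};

// Shows the integer sample positions available around a given beat.
Placement placeBeat (double beat, double bpm, double sampleRate)
{
    const double exact = beatToSamples (beat, bpm, sampleRate);
    return { (long long) std::floor (exact),
             (long long) std::ceil (exact),
             std::llround (exact) };
}
```

At 120 bpm and 44 100 Hz a beat is exactly 22 050 samples, so before and after coincide. At 130 bpm a beat is about 20 353.85 samples, so one candidate lands a fraction of a sample early and the other a fraction late, which would look like what you describe when zoomed in.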

Just curious, but did you ever figure something out? I’m having a similar issue in Ableton, where my note comes in just slightly before like this.