Linear phase EQ - problem

Hello,

I am not sure whether this is more of a DSP issue or just a programming one.

I made a couple of simple plugins. The first one generates a single impulse per buffer, like 1, 0, 0, 0…

The second plugin calculates the FFT of that impulse and draws the magnitude and phase shift of each frequency bin.
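To show what the analyzer is expected to output for an untouched impulse, here is a minimal sketch in plain Python. It is not my plugin code: the naive `dft` helper and the small size `N = 64` (standing in for 8192) are assumptions made only for illustration. An impulse at sample 0 should give magnitude 1 and phase 0 in every bin.

```python
import cmath
import math

def dft(x):
    """Naive O(N^2) DFT, good enough for a small illustration."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]

def magnitude_and_phase(x):
    """Return magnitudes and phases (radians) for bins 0..N/2."""
    spectrum = dft(x)
    half = len(x) // 2 + 1
    mags = [abs(c) for c in spectrum[:half]]
    phases = [cmath.phase(c) for c in spectrum[:half]]
    return mags, phases

N = 64  # assumption: small stand-in for the 8192-sample buffer
impulse = [1.0] + [0.0] * (N - 1)  # 1, 0, 0, 0...

mags, phases = magnitude_and_phase(impulse)
print(max(mags), min(mags))          # flat magnitude
print(max(abs(p) for p in phases))   # phase stays at zero
```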

The aim is to put any EQ between those two plugins and measure its response.

Everything works great until I use a linear phase EQ. I tried various EQs and got the same results with all of them. To demonstrate my issue here, I use Fabfilter Pro-Q2.

But to the point. When I use the standard “zero latency” mode, I get good results, and it looks like this:

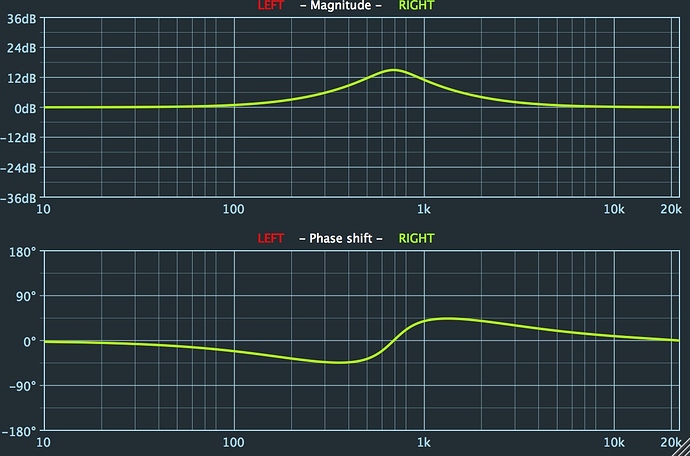

But when I switch to “linear phase” or “natural phase” mode, the phase plot turns into a mess.

No matter which frequency I tweak on the EQ, and even if I leave the EQ flat without any correction, the phase plot stays just as nasty.

I tried to debug it and found that when I use “linear phase” mode (without any EQing, just a flat EQ), it moves my impulse from the beginning of the buffer (1, 0, 0…) to somewhere in the middle of the buffer (0, 0, … 0, 1, 0… 0, 0). I have no idea why this happens. For a buffer size of 8192 it moves my impulse exactly 1024 samples forward (that is ⅛ of the buffer size).
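To make this observation concrete, here is a minimal sketch in plain Python (again not the plugin code). It assumes the flat EQ behaves as a pure delay of `DELAY` samples and uses `N = 64` with `DELAY = 8`, the same ⅛ ratio as 1024 in 8192; both names and the naive `dft` helper are made up for the illustration. A delayed impulse keeps a flat magnitude, but its phase becomes a ramp of -2π·k·DELAY/N that wraps many times across the spectrum. Multiplying each bin by the opposite phase term brings the phase back to zero, under that pure-delay assumption.

```python
import cmath
import math

def dft(x):
    """Naive O(N^2) DFT, good enough for a small illustration."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]

def wrap(phi):
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (phi + math.pi) % (2 * math.pi) - math.pi

N = 64     # assumption: small stand-in for the 8192-sample buffer
DELAY = 8  # assumption: same 1/8 ratio as 1024 samples in 8192

delayed = [0.0] * N
delayed[DELAY] = 1.0  # 0, 0, ... 0, 1, 0 ... 0, 0

spectrum = dft(delayed)
half = N // 2 + 1

mags = [abs(c) for c in spectrum[:half]]
measured = [cmath.phase(c) for c in spectrum[:half]]

# Phase a pure delay should produce: a linear ramp, wrapped to [-pi, pi).
expected = [wrap(-2 * math.pi * k * DELAY / N) for k in range(half)]
ramp_error = max(abs(wrap(m - e)) for m, e in zip(measured, expected))

# Remove the known delay by rotating each bin back by the same angle.
compensated = [cmath.phase(spectrum[k] * cmath.exp(2j * math.pi * k * DELAY / N))
               for k in range(half)]

print(ramp_error)                        # measured phase matches the ramp
print(max(abs(p) for p in compensated))  # phase is flat again
```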

What should probably be mentioned: I use a constant buffer size (8192) for both my impulse and my FFT plugins, and it is different from the one my plugin host has set. I am not sure whether I have the same issue when all buffer sizes are the same; I need to check that this evening. But even so, that would not solve my problem: I don’t want to use the plugin host’s buffer size, because it is small and the resulting graph resolution at the low end is not good enough for me.
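The resolution concern comes down to bin spacing, which is the sample rate divided by the FFT size. A small sketch, assuming a 44100 Hz sample rate and a hypothetical host buffer of 512 samples (neither value is from my actual setup):

```python
def bin_spacing_hz(sample_rate, fft_size):
    """Distance in Hz between neighbouring FFT bins."""
    return sample_rate / fft_size

SAMPLE_RATE = 44100.0  # assumption: not stated in the post
HOST_BUFFER = 512      # hypothetical host buffer size
MY_BUFFER = 8192       # the size my plugins use

for size in (HOST_BUFFER, MY_BUFFER):
    print(size, round(bin_spacing_hz(SAMPLE_RATE, size), 2), "Hz per bin")
```

With these assumed numbers, the small buffer gives bins roughly 86 Hz apart, which leaves only a handful of points below a few hundred Hz, while 8192 samples gives bins a little over 5 Hz apart.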

Could anyone give me a hint?